Open-source tools to build and deploy Computer Vision at scale

The open-source toolset to accelerate your project by 5x and bring it to production smoothly

The streamlined path to Computer Vision solutions

Developing a Computer Vision solution takes many trials, training runs and combinations, all while dealing with diverse input and output structures, dependencies, sources and library incompatibilities.

We have simplified the entire process.

Tailor-made tools to ease the creation of Computer Vision applications

300+ ready-to-use SOTA algorithms

We've carefully curated the best open-source SOTA algorithms, and packaged them so that they respond to the same Python commands.

A growing, vision-focused library of 300+ algorithms for image classification, object detection and segmentation, pose estimation, text detection and recognition…

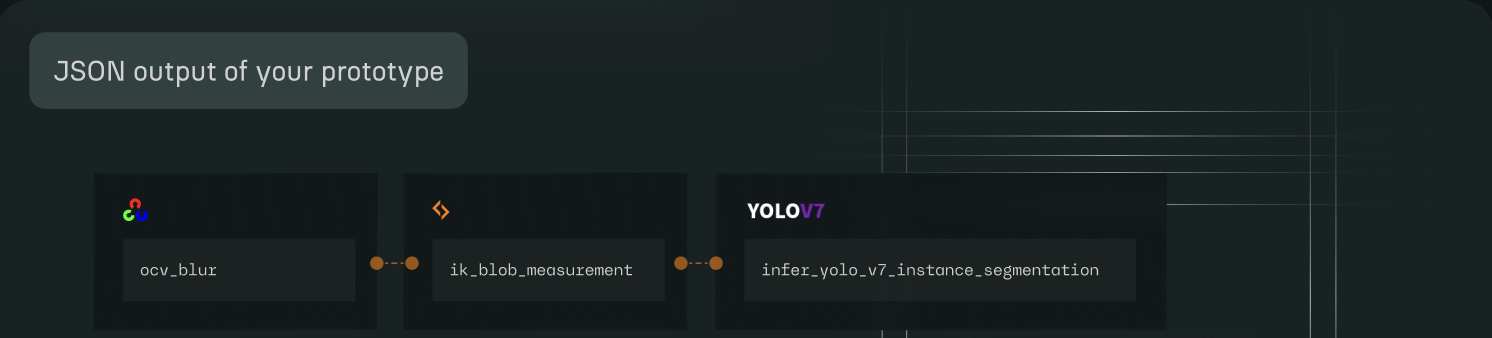

Prototype seamlessly with the API

Develop Computer Vision solutions without worrying about installation, dependencies or compatibility issues.

You can train, test and build workflows by reusing the same code patterns.

Feel free to mix any algorithms from the HUB: their inputs and outputs are compatible with one another.
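To illustrate the idea of reusable code patterns and compatible inputs and outputs, here is a minimal, self-contained Python sketch. It is not the API itself: `VisionData`, `ToyWorkflow`, `threshold` and `count_foreground` are hypothetical names invented for this example. It only shows the principle that, when every step accepts and returns the same container, any step can be chained after any other.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class VisionData:
    """Hypothetical shared container passed between every step."""
    image: List[List[int]]  # grayscale pixels, row-major
    annotations: Dict[str, object] = field(default_factory=dict)


# A step is any callable that takes and returns the same container type.
Step = Callable[[VisionData], VisionData]


def threshold(level: int) -> Step:
    """Toy 'segmentation' step: binarise pixels at the given level."""
    def run(data: VisionData) -> VisionData:
        mask = [[1 if px >= level else 0 for px in row] for row in data.image]
        return VisionData(data.image, {**data.annotations, "mask": mask})
    return run


def count_foreground(data: VisionData) -> VisionData:
    """Toy 'measurement' step that consumes the mask produced upstream."""
    mask = data.annotations["mask"]
    total = sum(sum(row) for row in mask)
    return VisionData(data.image, {**data.annotations, "foreground_pixels": total})


class ToyWorkflow:
    """Hypothetical workflow: runs its steps in the order they were added."""

    def __init__(self) -> None:
        self.steps: List[Step] = []

    def add(self, step: Step) -> "ToyWorkflow":
        self.steps.append(step)
        return self

    def run(self, data: VisionData) -> VisionData:
        for step in self.steps:
            data = step(data)
        return data


image = [[10, 200, 30], [220, 40, 250]]
result = ToyWorkflow().add(threshold(128)).add(count_foreground).run(VisionData(image))
print(result.annotations["foreground_pixels"])  # 3
```

Because each step only depends on the shared container, swapping `threshold` for another step that also writes a `mask` annotation would leave the rest of the chain unchanged.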

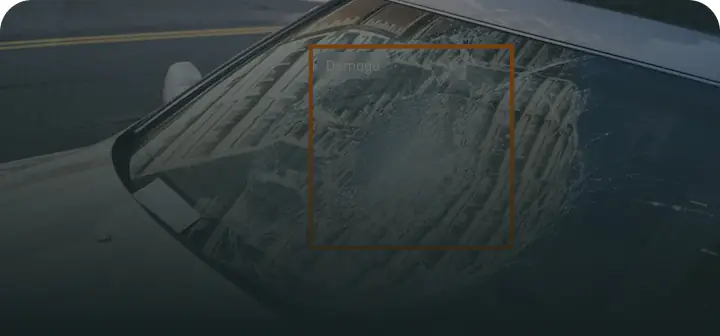

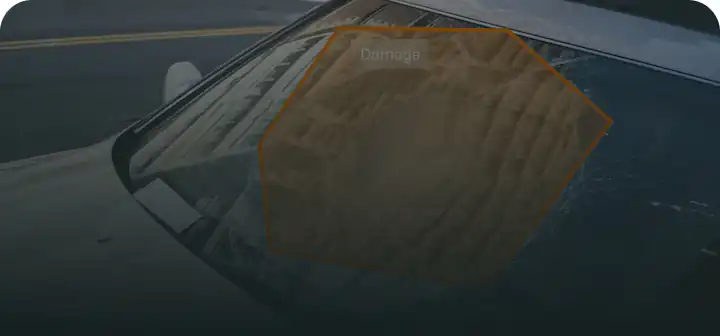

Or adopt STUDIO, the vision desktop application

Would you rather use a friendly UI than write Python code? We built a desktop application version of the API for you.

Build inference and training pipelines and see the results immediately in the same window.

Go to production with SCALE [Beta version]

We had a dream that Computer Vision solutions could be deployed for users without setting up and maintaining a complex infrastructure.

So we built an automated deployment SaaS platform that lets engineers turn projects into businesses without needing DevOps knowledge.

Easy access to your preferred libraries and sources

Without installation, dependency or compatibility issues

Build solutions from A to Z...

Test algorithms, chain them into workflows regardless of source or library, create your own algorithms and train models.

Selection and tests

Find algorithms

Experiment

Adjust settings

Prototyping

Train

Test model

Chain algorithms

Validate

Export your workflow

...then put them into production

With SCALE, the automated Computer Vision deployment SaaS platform, transform your workflows and models into ready-to-use API endpoints in a few clicks.

Your solution is deployed at scale on a robust and secure cloud infrastructure.
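As a rough idea of what consuming such an endpoint could look like from client code, the sketch below builds an HTTP request with the Python standard library. Everything specific in it is an assumption made for illustration: the URL, the bearer-token authentication, the `image` field and the base64 JSON payload are hypothetical, not the documented request format of SCALE.

```python
import base64
import json
import urllib.request

# Hypothetical values: replace with those of your own deployment.
ENDPOINT_URL = "https://example.com/my-workflow/run"
API_TOKEN = "replace-me"


def build_inference_request(image_bytes: bytes) -> urllib.request.Request:
    """Build (but do not send) a JSON POST carrying a base64-encoded image."""
    payload = {"image": base64.b64encode(image_bytes).decode("ascii")}
    return urllib.request.Request(
        ENDPOINT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {API_TOKEN}",
        },
        method="POST",
    )


request = build_inference_request(b"\x89PNG fake image bytes")
print(request.get_method(), request.full_url)
```

Sending the request would then be a matter of passing it to `urllib.request.urlopen` and decoding the response body, whose structure depends on the deployed workflow.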

Build with Python API

Create with STUDIO app
